AWS Multi-Account Strategy and Landing Zone

This article describes AWS multi-account strategies and the services needed to build a landing zone, with diagrams as a visual guide.

AWS multi-account strategy is a powerful method of managing multiple AWS accounts within an organization. It is designed to help organizations scale and manage their cloud infrastructure more effectively while maintaining security and compliance. In this article, we will explore the key components of an AWS multi-account strategy and how it can be implemented to achieve better control and efficiency in managing cloud resources.

Why Multiple Accounts?

- Security controls: Applications within the same organization can require different security controls. For example, a workload in scope for PCI-DSS needs different controls than other applications.

- Isolation: Isolation is crucial to prevent potential risks and security threats that may arise from having multiple applications in the same account.

- Many teams: Using multiple accounts prevents team interference, as teams with different responsibilities and resource needs are separated.

- Data Isolation: Isolating data stores to an account limits access and management of data to a select few, reducing the risk of unauthorized exposure of sensitive information.

- Business process: Individual accounts can be created to cater to specific business needs since business units or products often have different purposes and processes.

- Billing: The multi-account approach allows for the creation of distinct billable items across business units, functional teams, or individual users.

- Quota allocation: AWS service quotas are set on a per-account basis, so giving each project its own account gives it a well-defined, individual quota.

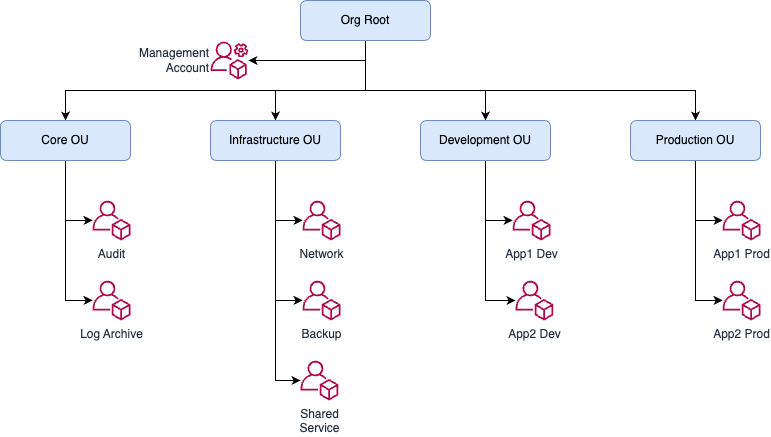

Design OU Structure

An organizational unit (OU) is a logical grouping of accounts in your organization, created using AWS Organizations. OUs let you organize your accounts into a hierarchy and make it easier to apply management controls. You apply such controls with AWS Organizations policies. A Service Control Policy (SCP) is one such policy: it defines the maximum set of AWS service actions that the accounts it applies to are allowed to use.

When building this structure, a key principle is to reduce the operational overhead of managing it. To achieve this, minimize the depth of the overall hierarchy and the number of places where policies are applied. For example, apply Service Control Policies (SCPs) primarily at the OU level rather than at the account level, which eases troubleshooting.

SCPs enforce organizational policies across multiple accounts, and in this design they are attached at the organizational unit (OU) level. By tagging resources and referencing those tags in policy conditions, you can scope policies to specific resources, which helps you manage and secure them more effectively.
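As an illustration of what an SCP attached to an OU can look like, the following minimal sketch builds a policy document that denies requests outside a set of approved Regions. The Region list, the statement ID, and the exempted global services are assumptions chosen for the example, not values from this article, and the exemption list is not complete.

```python
import json

# Hypothetical list of approved Regions; adjust to your organization.
APPROVED_REGIONS = ["eu-west-1", "eu-central-1"]


def build_region_guardrail(approved_regions):
    """Return an SCP document that denies requests outside the approved Regions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyOutsideApprovedRegions",  # hypothetical statement ID
                "Effect": "Deny",
                # Some global services are exempted so they keep working.
                # This list is illustrative, not exhaustive.
                "NotAction": ["iam:*", "organizations:*", "sts:*", "support:*"],
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": list(approved_regions)
                    }
                },
            }
        ],
    }


scp = build_region_guardrail(APPROVED_REGIONS)
print(json.dumps(scp, indent=2))
```

Because an SCP only limits what is possible and never grants permissions, the accounts in the OU still need IAM policies that allow the actions they use.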

The following diagram illustrates the common OU structure for enterprises.

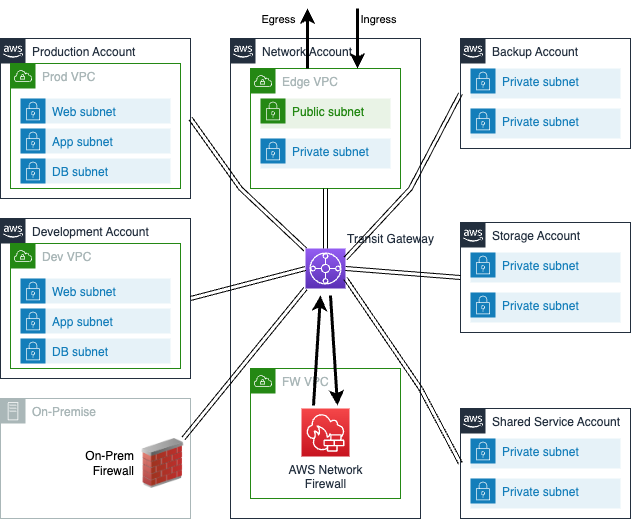

Central Firewall and Network

Many AWS customers adopt AWS Control Tower as a key part of their multi-account strategy, aiming to achieve business agility and centralized governance. Once deployed, customers seek to leverage the benefits of AWS Control Tower, such as the creation of a secure and scalable networking architecture, which can be seamlessly integrated with new accounts and VPCs.

To achieve a scalable multi-account, multi-VPC architecture, customers can utilize AWS Transit Gateway. They can also use ingress and egress VPCs to control connectivity to the internet and on-premises network via either an AWS Site-to-Site VPN connection or AWS Direct Connect. This centralizes network governance and control, as all networking requirements are managed from one central location: the networking account.

These components are integrated so that all traffic originating from VPCs is directed to AWS Network Firewall before being sent to its final destination, whether that be another VPC, an on-premises network, or the internet via the egress VPC. Similarly, all traffic coming from the internet to a VPC via the ingress VPC is first routed to AWS Network Firewall for inspection before being sent through the transit gateway to the destination VPC.
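The routing described above can be modeled as a small function that lists the hops a flow takes. This is only a sketch for reasoning about the design: the hop names are hypothetical labels, and the assumption that traffic returns to the transit gateway after inspection is one common arrangement, not something the article specifies.

```python
FIREWALL = "network-firewall"
TGW = "transit-gateway"


def inspection_path(source, destination):
    """Return the ordered hops for a flow in the centralized inspection design.

    `source` is a VPC name or "internet"; `destination` is a VPC name,
    "internet", or "on-premises". All names are illustrative labels.
    """
    if source == destination:
        raise ValueError("source and destination must differ")
    if source == "on-premises":
        raise ValueError("flows starting on-premises are not modeled in this sketch")

    path = [source]
    if source == "internet":
        # Inbound internet traffic enters through the ingress VPC.
        path.append("ingress-vpc")

    # Every flow is sent to the firewall before it reaches its destination.
    path += [TGW, FIREWALL, TGW]

    if destination == "internet":
        path.append("egress-vpc")
    elif destination == "on-premises":
        path.append("vpn-or-direct-connect")

    path.append(destination)
    return path


print(inspection_path("app-vpc", "internet"))
print(inspection_path("internet", "app-vpc"))
```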

The following diagram illustrates the central inspection firewall in the network account.

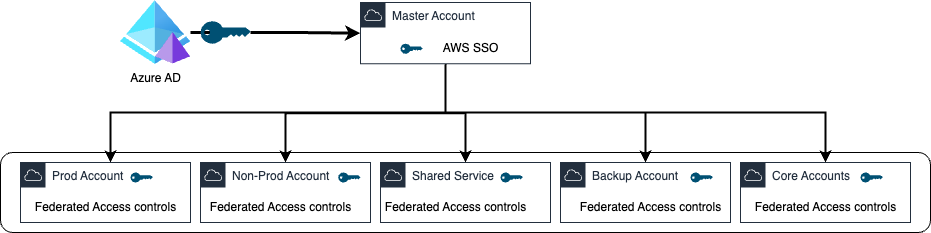

Identity and Access Management

Identity federation allows organizations to simplify user management, improve security, and make auditing and compliance easier by integrating AWS with an identity provider such as Okta or Azure AD. Administrators can then control user access to AWS resources based on role or job function.

Federation with Azure is a method of letting users access resources on Amazon Web Services (AWS) with their Microsoft Azure Active Directory (Azure AD) credentials. Users get a seamless experience, with a single set of credentials for both Azure and AWS resources.

The integration involves setting up Azure AD as an identity provider for AWS, configuring roles and permissions, and testing the configuration to ensure it works as expected. By using Azure AD as the identity provider with AWS SSO (since renamed AWS IAM Identity Center), organizations can centralize the management of user accounts and improve security, as access to resources is granted only to authorized users.

The following diagram illustrates the Azure AD integration with the AWS master (management) account.
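To make the "configuring roles" step more concrete, here is a minimal sketch of the trust policy an IAM role needs when users sign in through a SAML identity provider. It shows direct IAM SAML federation; when AWS SSO is used, the service provisions the roles in each account for you. The account ID and the provider name `AzureAD` are placeholders assumed for the example.

```python
import json


def saml_trust_policy(account_id, provider_name):
    """Return a role trust policy that lets a SAML identity provider's users assume the role."""
    provider_arn = f"arn:aws:iam::{account_id}:saml-provider/{provider_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": provider_arn},
                "Action": "sts:AssumeRoleWithSAML",
                # Restrict the role to assertions intended for the AWS sign-in endpoint.
                "Condition": {
                    "StringEquals": {
                        "SAML:aud": "https://signin.aws.amazon.com/saml"
                    }
                },
            }
        ],
    }


# Placeholder account ID and hypothetical provider name.
policy = saml_trust_policy("111122223333", "AzureAD")
print(json.dumps(policy, indent=2))
```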

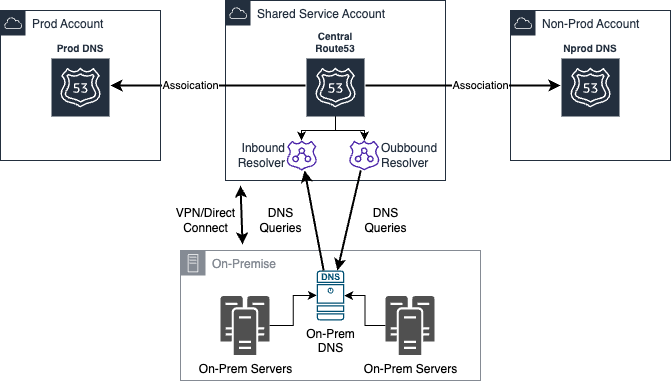

Central DNS

A central private hosted zone in Route 53 is a hosted zone that is used by VPCs in multiple AWS accounts. It allows you to create a single DNS namespace that can be used across all the VPCs and accounts within your organization. This means you can use the same domain names and DNS records to reference resources in different accounts or VPCs, without having to create duplicate hosted zones in every account.

To create a central private hosted zone in Route 53, first create the hosted zone in one of your AWS accounts. You then make it available to other accounts by authorizing their VPCs to be associated with the hosted zone and completing the association from each of those accounts. Route 53 Resolver rules, by contrast, can be shared with other accounts through a resource share in AWS Resource Access Manager (RAM).

You can use Route 53 Resolver inbound and outbound endpoints to receive DNS queries from, and forward DNS queries to, any on-premises DNS server.
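Forwarding to on-premises DNS servers is driven by rules that match on domain name, with the most specific matching domain winning. The sketch below models that selection in plain Python; the domain names and target IP addresses are made up for the example, and the function is a simplified model rather than the Resolver's actual implementation.

```python
def pick_forwarding_rule(query_name, rules):
    """Return (domain, targets) for the most specific rule matching query_name, or None.

    `rules` maps a domain name to the list of DNS server IPs to forward to.
    """
    name = query_name.rstrip(".").lower()
    normalized = {d.rstrip(".").lower(): targets for d, targets in rules.items()}

    best = None
    for domain in normalized:
        # A rule matches the domain itself and any name below it.
        if name == domain or name.endswith("." + domain):
            if best is None or len(domain) > len(best):
                best = domain

    if best is None:
        return None  # no rule matches; the query is resolved normally
    return best, normalized[best]


# Hypothetical forwarding rules pointing at on-premises DNS servers.
rules = {
    "example.com": ["10.0.0.20"],
    "corp.example.com": ["10.0.0.10"],
}
print(pick_forwarding_rule("db.corp.example.com", rules))
print(pick_forwarding_rule("aws.amazon.com", rules))
```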

The following diagram illustrates the central DNS server in AWS.

Infrastructure Automation

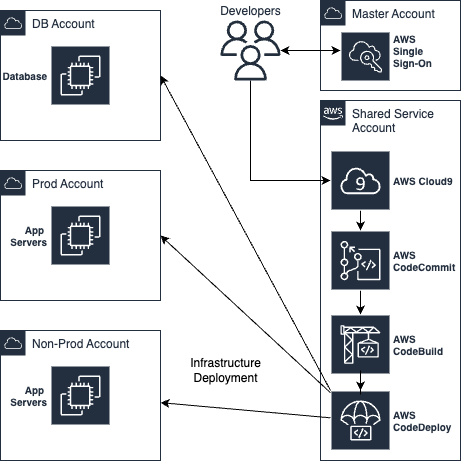

Infrastructure as Code (IaC) is a methodology for defining and managing infrastructure and application architecture in code. AWS CloudFormation or Terraform can be used to write these definitions, which can then be saved in a version control system such as AWS CodeCommit or another Git repository.

To manage and edit the code stored in AWS CodeCommit, use AWS Cloud9, which provides a cloud-based development environment that can be accessed from anywhere.

AWS CodeBuild can be used to automatically build the code whenever changes are made to the code in AWS CodeCommit. It can compile, test, and package code. AWS CodeDeploy can be configured to automatically deploy the code to the infrastructure whenever a successful build is made by AWS CodeBuild.

To integrate all the above tools, set up a CI/CD pipeline that uses AWS CodePipeline to automate the process and make it easier to manage the whole pipeline. AWS CodePipeline allows you to define the stages of your pipeline, the actions that take place in each stage, and the triggers that start each stage.

Once the infrastructure is provisioned using AWS CloudFormation or Terraform, run integration tests on the deployed application to verify its functionality. Finally, deploy the application to production using the CI/CD pipeline, which can automatically trigger the deployment based on predefined rules or manual approval.
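The flow of stages described above can be sketched as a tiny pipeline runner: stages run in order, and the pipeline stops at the first stage that fails, including a rejected manual approval. The stage names and the `build_stages` helper are hypothetical and stand in for what AWS CodePipeline would orchestrate.

```python
def run_pipeline(stages):
    """Run (name, action) stages in order and stop at the first failure."""
    results = []
    for name, action in stages:
        succeeded = action()
        results.append((name, "succeeded" if succeeded else "failed"))
        if not succeeded:
            break  # later stages never start
    return results


def build_stages(tests_pass, approved):
    """Return illustrative stages; real actions would call build and deploy tooling."""
    return [
        ("source", lambda: True),
        ("build", lambda: True),
        ("deploy-to-test", lambda: True),
        ("integration-tests", lambda: tests_pass),
        ("manual-approval", lambda: approved),
        ("deploy-to-production", lambda: True),
    ]


for name, status in run_pipeline(build_stages(tests_pass=True, approved=False)):
    print(f"{name}: {status}")
```

With `approved=False` the run ends at the approval stage, so the production deployment is never attempted.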

Also, use AWS services such as AWS Security Hub and AWS Config rules to automate security checks and help keep your accounts compliant with your security policies.

The following diagram illustrates the CI/CD pipeline for AWS infrastructure.

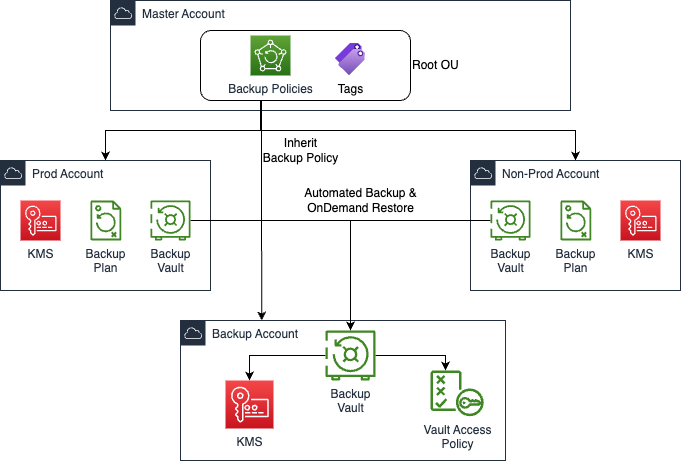

Central Backups

Central backup can be implemented in various ways, such as using a backup server or cloud-based backup solutions like AWS Backup. With AWS Backup, users can create backup policies for multiple accounts and regions and manage them centrally from a single AWS Management Console. This approach ensures consistency and reliability in backup policies, while simplifying management and reducing the likelihood of errors.

With AWS Backup, you can automate backup scheduling, retention policies, and data lifecycle management for various AWS resources such as EBS volumes, RDS databases, DynamoDB tables, EFS file systems, and more.

To set up a central backup using AWS Backup, you need to create a backup vault, create a backup plan, assign resources to the backup plan, monitor your backups, and restore your data as needed. AWS Backup provides a consolidated view of backup activity across your entire AWS environment, making it easier to manage and monitor your backups.
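As a sketch of the "create a backup plan" step, the function below assembles a plan in the shape accepted by the `BackupPlan` parameter of boto3's `create_backup_plan` call, without calling AWS. The plan name, vault name, schedule, and retention values are assumptions for the example. The check reflects the AWS Backup requirement that a backup moved to cold storage be retained at least 90 days beyond the transition.

```python
def build_backup_plan(name, vault, schedule, cold_after_days, delete_after_days):
    """Return a backup plan document with one rule, validating the lifecycle."""
    if delete_after_days < cold_after_days + 90:
        raise ValueError(
            "delete_after_days must be at least 90 days greater than cold_after_days"
        )
    return {
        "BackupPlanName": name,
        "Rules": [
            {
                "RuleName": f"{name}-daily",
                "TargetBackupVaultName": vault,
                "ScheduleExpression": schedule,
                "Lifecycle": {
                    "MoveToColdStorageAfterDays": cold_after_days,
                    "DeleteAfterDays": delete_after_days,
                },
            }
        ],
    }


# Hypothetical names; the schedule runs daily at 05:00 UTC.
plan = build_backup_plan(
    name="central-plan",
    vault="central-vault",
    schedule="cron(0 5 ? * * *)",
    cold_after_days=30,
    delete_after_days=365,
)
print(plan["Rules"][0]["Lifecycle"])
```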

The following diagram illustrates the central backup.

Central Logging

Central logging is an approach that lets you collect, store, and analyze logs generated by various resources in your AWS environment. It provides a centralized location for storing logs, which authorized users can access and analyze.

To implement central logging, first set up a logging destination, such as Amazon S3 or Amazon CloudWatch Logs. Then configure your AWS resources to send their logs to that destination, from which they can be forwarded to an analytics service such as Amazon OpenSearch Service.

Once logs are being sent to the logging destination, you can analyze and query them. This can help you troubleshoot issues, monitor performance, and detect security threats.

Amazon OpenSearch Service is a managed search and analytics service that allows you to search, analyze, and visualize data. OpenSearch originated as a fork of the open source Elasticsearch and Kibana projects, so much of the Elasticsearch API carries over.
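The kind of query a central log store enables can be illustrated with a small aggregation over JSON log lines collected from several accounts. The field names `account_id` and `level` are assumptions about the log format, not a schema defined by any AWS service.

```python
import json
from collections import Counter


def errors_per_account(log_lines):
    """Count ERROR-level records per account, skipping lines that are not JSON objects."""
    counts = Counter()
    for line in log_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # ignore malformed lines rather than failing the whole query
        if not isinstance(record, dict):
            continue
        if record.get("level") == "ERROR":
            counts[record.get("account_id", "unknown")] += 1
    return counts


# Made-up sample records from two accounts.
sample = [
    '{"account_id": "111111111111", "level": "ERROR", "message": "timeout"}',
    '{"account_id": "111111111111", "level": "INFO", "message": "started"}',
    '{"account_id": "222222222222", "level": "ERROR", "message": "access denied"}',
    '{"account_id": "111111111111", "level": "ERROR", "message": "timeout"}',
    "not json",
]
print(errors_per_account(sample).most_common())
```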

The following diagram illustrates the central logging in AWS.

Central CloudWatch Monitoring

CloudWatch provides a centralized view of your AWS resources and application performance, allowing you to monitor metrics such as CPU utilization, network traffic, and database connections. You can also create custom metrics to monitor specific aspects of your application or service.

CloudWatch also allows you to monitor logs generated by your resources and applications, and lets you search, analyze, and visualize those logs in real time from your central monitoring account. This can help you troubleshoot issues and identify trends in your application or service.
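For the custom metrics mentioned above, the following sketch builds the arguments for a single data point in the shape accepted by boto3's `put_metric_data` call, without calling AWS. The namespace, metric name, and dimensions are hypothetical. The check on the `AWS/` prefix reflects that this prefix is reserved for metrics published by AWS services.

```python
def custom_metric(namespace, name, value, unit, dimensions):
    """Return keyword arguments describing one custom metric data point."""
    if namespace.startswith("AWS/"):
        raise ValueError("custom namespaces must not start with 'AWS/'")
    return {
        "Namespace": namespace,
        "MetricData": [
            {
                "MetricName": name,
                "Dimensions": [
                    {"Name": key, "Value": val}
                    for key, val in sorted(dimensions.items())
                ],
                "Value": float(value),
                "Unit": unit,
            }
        ],
    }


# Hypothetical application metric tagged with its source account.
payload = custom_metric(
    namespace="MyApp/Orders",
    name="PendingOrders",
    value=17,
    unit="Count",
    dimensions={"AccountId": "111111111111", "Environment": "prod"},
)
print(payload)
```

Including the source account as a dimension is one way to keep data points distinguishable once they are viewed from the central monitoring account.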

Conclusion

An effective AWS multi-account strategy can help organizations better manage their cloud resources, providing greater control, security, and efficiency. By carefully considering the account structure, IAM policies, networking and connectivity, security and compliance, and cost management, organizations can create a cloud environment that is well-suited to their specific needs.

